Has the AI transition met its first real resistance?

As the parasite class maps the future of work, workers themselves are quietly refusing to cooperate.

Earlier this week, OpenAI released a 13-page report titled “Industrial Policy for the Intelligence Age: Ideas to Keep People First,” outlining how governments should navigate the coming wave of AI disruption. The tone is not alarmist: change is coming, the company says, but it can be managed. Workers will transition, systems will adapt, and innovation will continue.

Although it’s a policy paper laying out top-down solutions, the company makes all the right noises about involving the grassroots and soliciting public input.

The report says:

> No one knows exactly how this transition will unfold. At OpenAI, we believe we should navigate it through a democratic process that gives people real power to shape the AI future they want, and prepare for a range of possible outcomes while building the capacity to adapt.

The AI future people want is one that doesn’t steal their livelihoods and leave them with no productive future. We know that because people are actually saying as much.

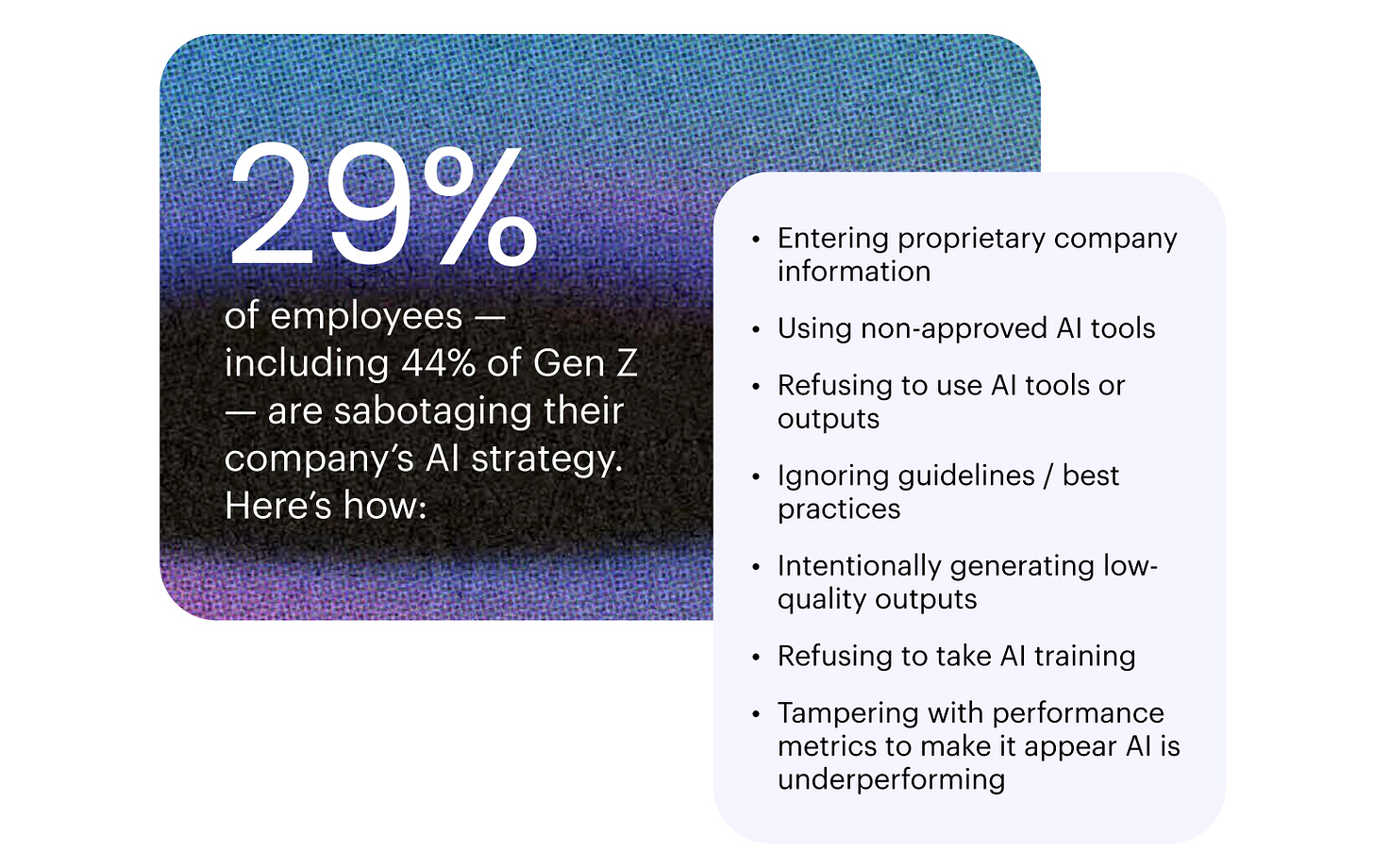

A new report from Writer, an enterprise AI agent firm, and the research firm Workplace Intelligence finds that a significant share of employees are actively trying to sabotage their company’s AI rollout. The report surveyed 2,400 knowledge workers across the US, UK, and Europe. Half were C-suite executives and the other half were employees. Nearly one in three respondents admitted to sabotaging their company’s AI strategy, and among Gen Z workers that number jumps to as high as 44%.

Guests on the Collapse Life podcast often say eventually people will rise up and start protesting the ugly future being prepared for us. We wondered why it hadn’t happened already, but maybe we were just looking in the wrong place. People are not taking to the streets or organizing labor action. They’re just quietly ignoring AI systems, feeding them bad data, and deliberately undermining their outputs. So there may be reason for optimism after all.

Most AI policy rests on the simple assumption that adoption is inevitable. Once tools become efficient enough, organizations will deploy them, workers will adapt, and the system will move forward.

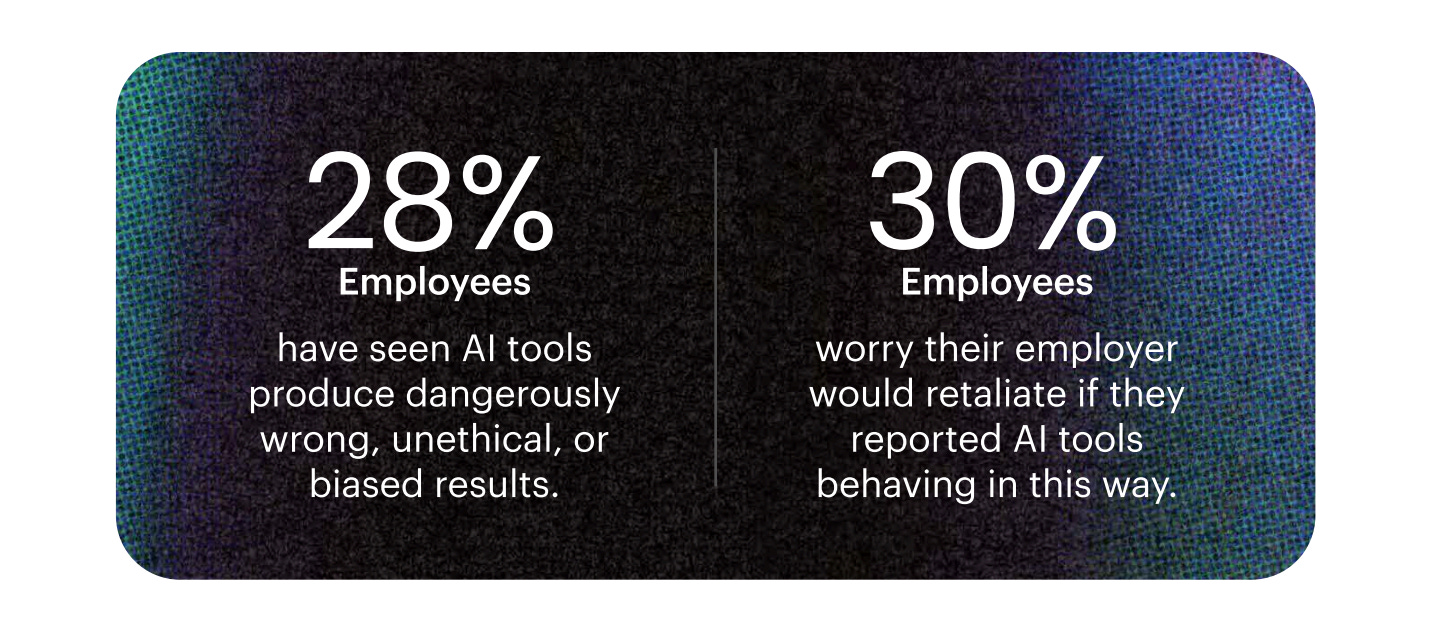

But that assumption depends on cooperation, which is a pretty fragile basis on which to build the future. And it’s clearly starting to fray. The same people tasked with implementing AI are the ones most exposed to its consequences, which creates a contradiction that policy papers tend to smooth over: the rollout depends on the active participation of people who may have no incentive to see it succeed.

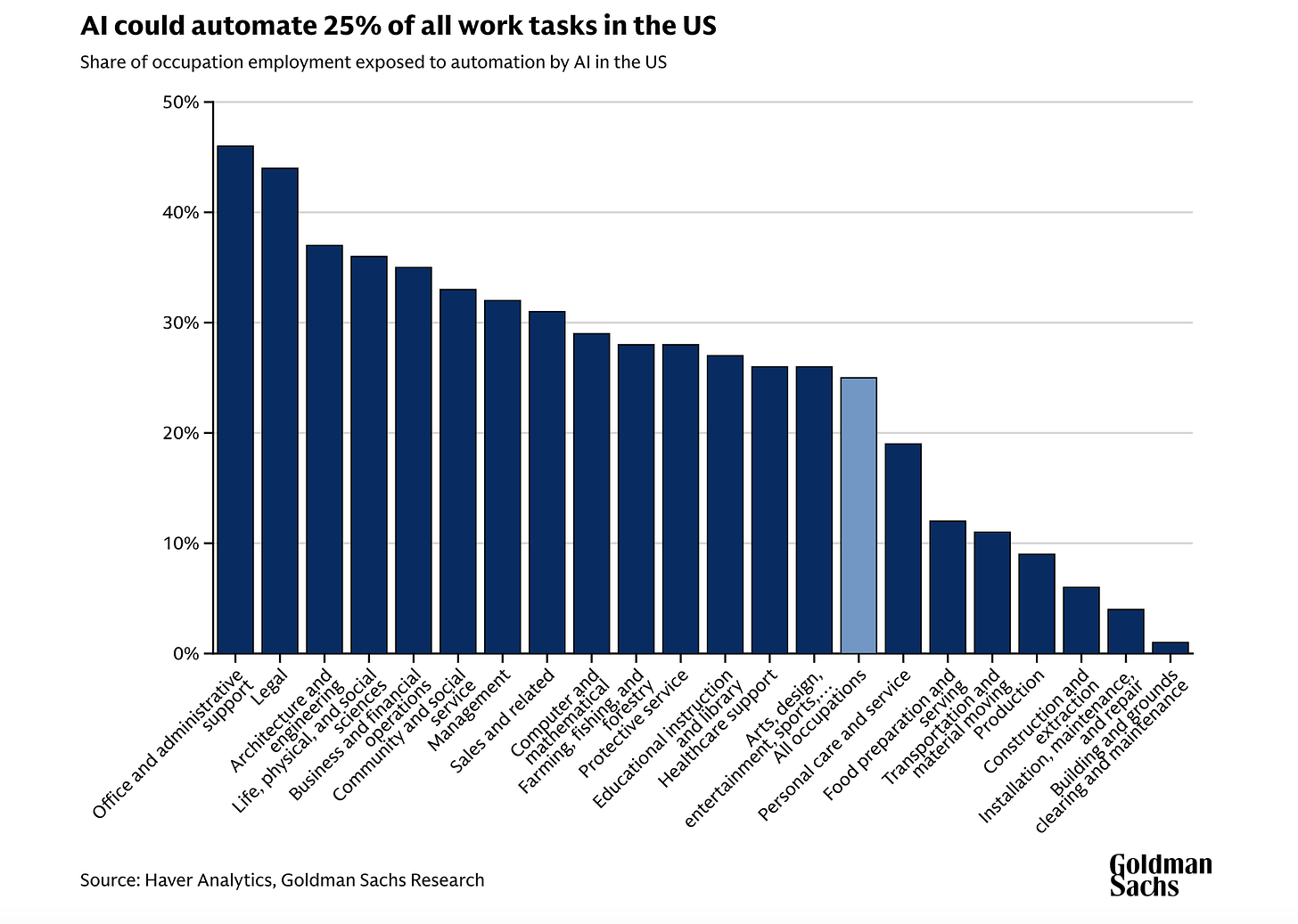

Entry-level roles are already being compressed, and a recent Goldman Sachs research study estimates that AI could automate 25% of all work tasks in the US within the next decade.

Tasks that once required teams now require tools. Companies are openly discussing leaner structures, fewer hires, and more automation. For younger workers just entering the system, the signal is hard to miss: the rungs on the ladder they are trying to climb, toward a career or at least some semblance of a life, are disappearing before their eyes.

From that perspective, adoption looks like replacement, making the response instinctive. If the system you are being asked to build is the one that removes you from it, the rational response is to resist in whatever way possible. The effort can be small: a carelessly entered prompt, a poorly designed workflow. None of it is dramatic, but all of it adds friction.

Unlike previous waves of disruption, this one moves at a speed that leaves little room for adjustment. Steelworkers in America’s Rust Belt saw their livelihoods erode over decades. Now, an entry-level role can disappear in months. The time between “useful” and “obsolete” is shrinking. That affects behavior: rather than looking for ways to adapt, workers are playing defense.

There is a tendency to frame this kind of resistance as fear — specifically, what’s now being called FOBO, the fear of becoming obsolete. But fear, in this case, reflects an understanding — sometimes clearer at the ground level than at the policy level — of what is actually happening.

Systems, no matter how advanced or “intelligent,” still depend on human inputs at critical points. Data has to be labeled, outputs have to be trusted, workflows have to be followed. If those inputs degrade, even slightly, even inconsistently, the system degrades with them.

The question is not whether AI will advance. Of course it will. The question is whether the timeline being modeled in policy papers, investor decks, and corporate strategy sessions accounts for the simplest but perhaps most powerful variable in the system: people.

Individuals can make daily decisions about how much to comply, how much to engage, and how much to quietly resist. If enough of those decisions tilt in the same direction, something subtle but consequential could happen.

The future could arrive more slowly than expected, or not arrive at all in the form it was designed to take.

I do round trips daily between “AI is gonna take all ‘air-conditioned’ jobs” and “no way something this unreliable can be trusted for any meaningful task without human supervision.” Fast is not the same as right. Guess I need to step up to a paid version to unleash the apocalypse. 😳

If you were to take AI to its final, all-powerful iteration, and you have to really imagine what it would look like... if these agents did evolve into something unrecognizable and beyond our comprehension, so powerful, efficient, god-like...

Would they "manage humanity" for our own good, for our survival, since we seem to be, well, fatally flawed, self-destructive, and possibly capable of our own extinction? Many SF writers have written about this, notably Neal Asher, and I've pondered it many times in my fertile imagination.

We live in amazing times, with SF dreams coming true, AI evolving, and miraculous technology, and yet I refuse to believe in the endgame of a technocratically controlled digital prison. We're not the masters we thought we were, above Nature; we're bound by our intrinsic design to be a part of, and stewards of, the web of life.

I can imagine a different future where AI could save us, not by being our master but by allowing us to master ourselves in harmony with Nature.

So I was heartened to see in your article a new form of resistance emerging, like nature fighting back, like viral escape, like adaptation, in order to survive.